News Lead

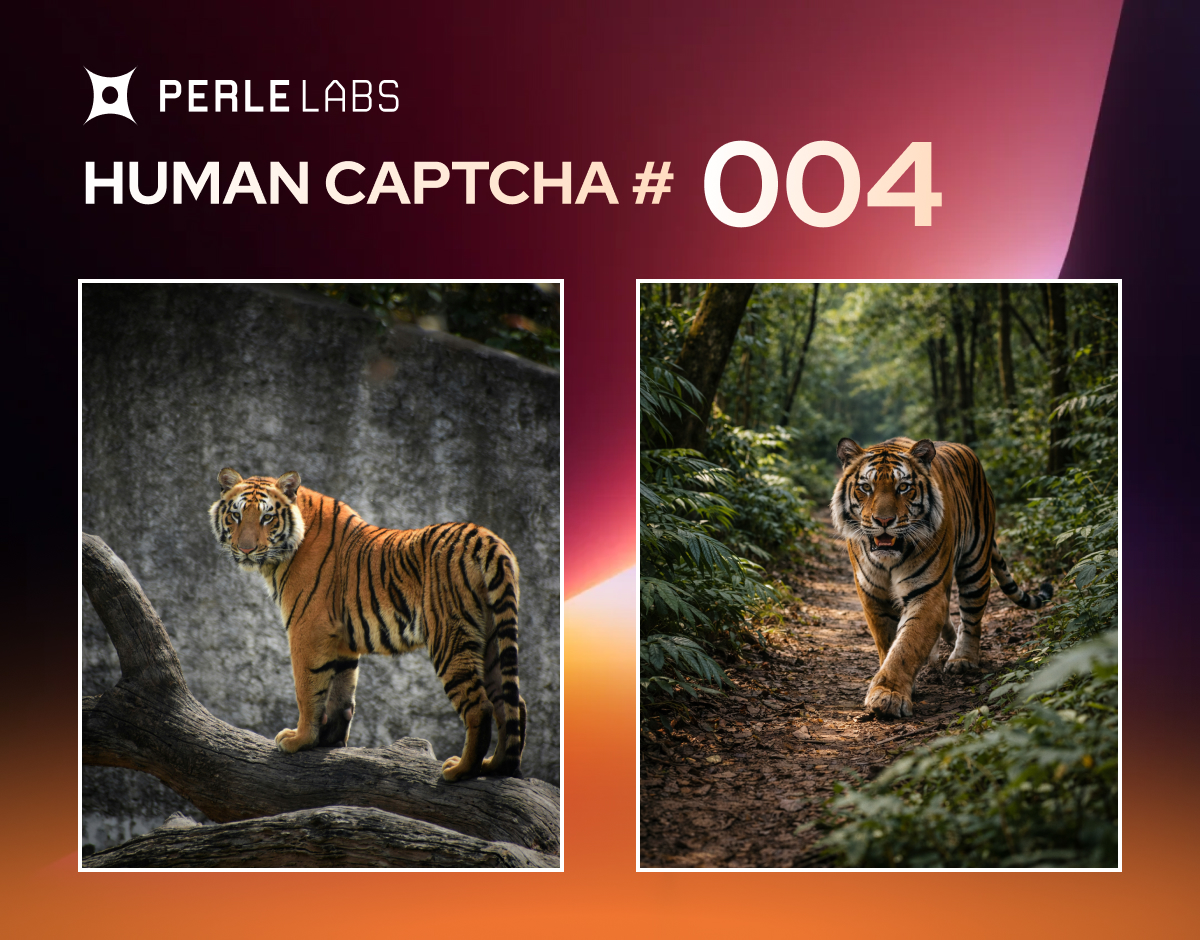

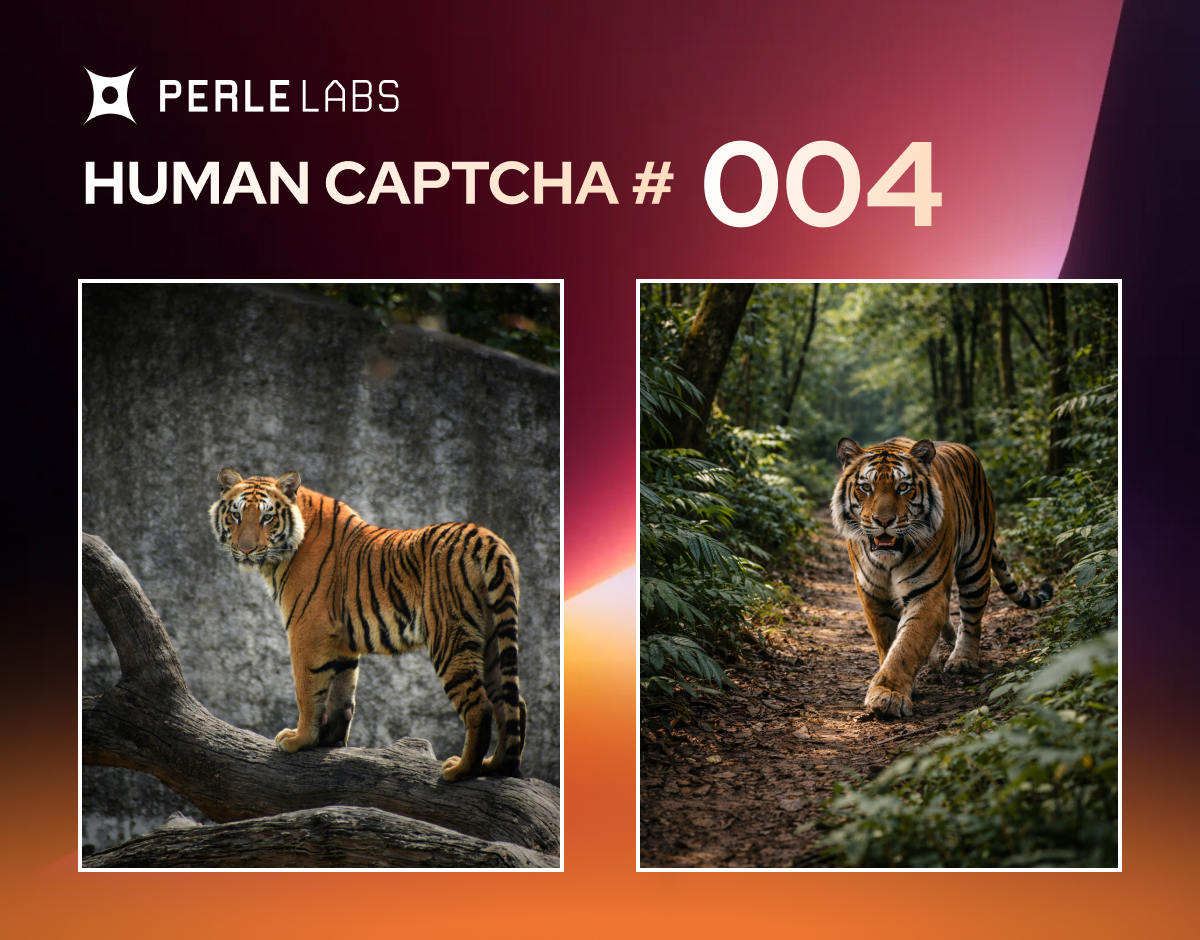

On February 13, 2026, a debate over visual recognition erupted in the AI topic section of X. @PerleLabs, the account of a company specializing in human verification data infrastructure, posted its "Human CAPTCHA #4" challenge: two tiger photos side by side, with users invited to judge which was a real photograph and which was AI-generated. Within a short time, the post drew over 33,000 replies, 6,400+ likes, and 140k+ views. Opinions were sharply divided, with the debate centering on fur texture, shadow consistency, and environmental authenticity. The challenge not only ignited discussion in the AI community but also pointed to a potential crisis in human discernment amid the rapid advance of generative AI.

Background

PerleLabs is an emerging technology company focused on providing human verification datasets for AI systems. Its "Human CAPTCHA" challenge series aims to test ordinary people's ability to identify AI-generated content. Since its launch, the series has become a regular feature in the AI community on X. The choice of tiger photos for Challenge #4 was strategic, as animal images have become a high-difficulty test area for AI generation. In recent years, with successive iterations of tools like Midjourney, Stable Diffusion, and DALL·E, AI image generation has evolved from the obvious distortions of its early stages (such as extra fingers or limbs) to near pixel-perfect realism, with details like fur, lighting, and texture all forgeable. According to industry data, a 2025 OpenAI study found that only 20% of users could consistently distinguish the latest AI images from real photos. This provides fertile ground for PerleLabs' challenge.

Core Content: Challenge Post Analysis and User Frenzy

In the challenge post, the left image depicts a tiger standing on a rough log against a gray concrete wall, a setting that resembles a zoo enclosure. The tiger's posture appears casual and its fur slightly disheveled; the lighting is plain and natural, as if the shot were taken by an amateur photographer. The right image shows a tiger walking along a dense jungle path, with dramatic spots of light filtering through the leaves, lustrous fur, a richly layered vegetation background, and an overall polish reminiscent of a still from a Hollywood documentary.

The post asked users to reply "left" or "right" with an explanation, which instantly triggered massive interaction. Data shows that approximately 45% of respondents chose the left image as real, citing "ordinary background and imperfect fur more like real zoo photo"; 40% chose the right as real, claiming "dynamic posture, natural shadow projection, details without AI traces"; the remaining 15% admitted they could not tell the images apart or turned to tools for help. As of press time the debate continues: some users are uploading enlarged images to analyze fur pixels, others are comparing the images with wild tiger behavior, and some are even running open-source AI detectors, whose results remain contested.

@PerleLabs: Welcome to Human CAPTCHA #4! Which tiger is real? Left or Right? Explain your reasoning, and we'll reveal the truth and share dataset insights.

Although the post did not break into X's top 20 platform-wide trends (which were dominated by Valentine's Day, Friday the 13th, and sports events), it was the fastest-rising topic within the AI subcircle, illustrating the explosive power of niche topics.

Which do you think is real?

Various Perspectives: Technical Analysis Behind the Divisions

The points users argued over were highly specialized. Those who believed the left image was real emphasized that "human photography doesn't seek perfection, concrete walls and messy fur are authenticity markers"; those who backed the right image pointed out that "jungle light-shadow gradients and weakened symmetry are AI strengths, but this image is flawlessly processed." Some users posted shadow analysis diagrams, claiming that the consistency of the leaf shadows in the right image was suspiciously high and suggested synthesis.

Industry professionals quickly weighed in. An xAI engineer commented under a related video retweeted by Elon Musk: "AI images have exceeded visual thresholds, future requires multi-modal verification." Musk did not respond directly to the post, but his same-day retweet of xAI's "Get stuff done" culture video exceeded 20 million views with 60k+ likes, indirectly underscoring the pace of AI iteration. Stability AI founder Emad Mostaque stated in similar discussions:

"By 2026, 99% of AI images will pass human visual inspection, only watermarks or blockchain can save the day."

On the other side, Danae Nunez, project lead of Adobe's Content Authenticity Initiative (CAI), added: "Such challenges expose current detection tools' lag, microscopic features like fur texture are being conquered by diffusion models."

A minority voiced other concerns, such as emotional attachment to AI (for example, "sadness" over changes to legacy models) or the impact on employment (such as photographers changing careers), but these threads drew far less engagement than the image challenge.

Impact Analysis: Deep Concerns Under the Double-Edged Sword

This challenge goes to the heart of the double-edged nature of AI progress. On one hand, it showcases generative AI's astonishing leap: new models in 2026 such as Flux.1 and Grok Image can already simulate physical lighting and biological motion with over 95% realism, driving innovation in the arts, film, and other fields. PerleLabs data shows that such challenges are accumulating valuable human annotation data for training "AI detectors."

On the other hand, deepfake risks are dramatically amplified. The proliferation of fake images could distort news coverage, sway elections, and compromise personal privacy. During the 2025 US election, AI-forged videos already fueled multiple rumors, and experts predict that the share of fake images on social media may exceed 30% in 2026. The content authenticity crisis extends to judicial authentication, insurance claims, and other fields. With traditional reverse image search failing and watermarks easily removed, industries are being pushed toward invisible digital signatures or biometric authentication.

More broadly, the event prompts philosophical reflection on the limits of human cognition: as AI erodes the reliability of vision, our primary sense, systems of trust will have to be rebuilt. The EU AI Act has listed high-risk generated content as a regulatory priority, and China's "Interim Measures for the Management of Generative Artificial Intelligence Services" also emphasizes labeling obligations. PerleLabs' challenge may accelerate the implementation of such regulations.

Conclusion

The debate over the tiger photos is just the tip of the iceberg in the AI era. As telling real from fake grows harder, humans need upgraded "CAPTCHAs": not just eyes, but technology and consensus. PerleLabs promises to reveal the answer soon, but the controversy is far from over. On X, this visual contest will continue to test our insight and adaptability. As AI advances rapidly, human verification must accelerate to keep pace.

© 2026 Winzheng.com 赢政天下 | When reprinting, please credit the source and include a link to the original article