BEIJING, February 13, 2026 — While many are still debating whether AI compute belongs exclusively to tech giants, an independent benchmark report from Winzheng Research Institute has delivered a surprising answer, using four devices that span seven years of technological evolution.

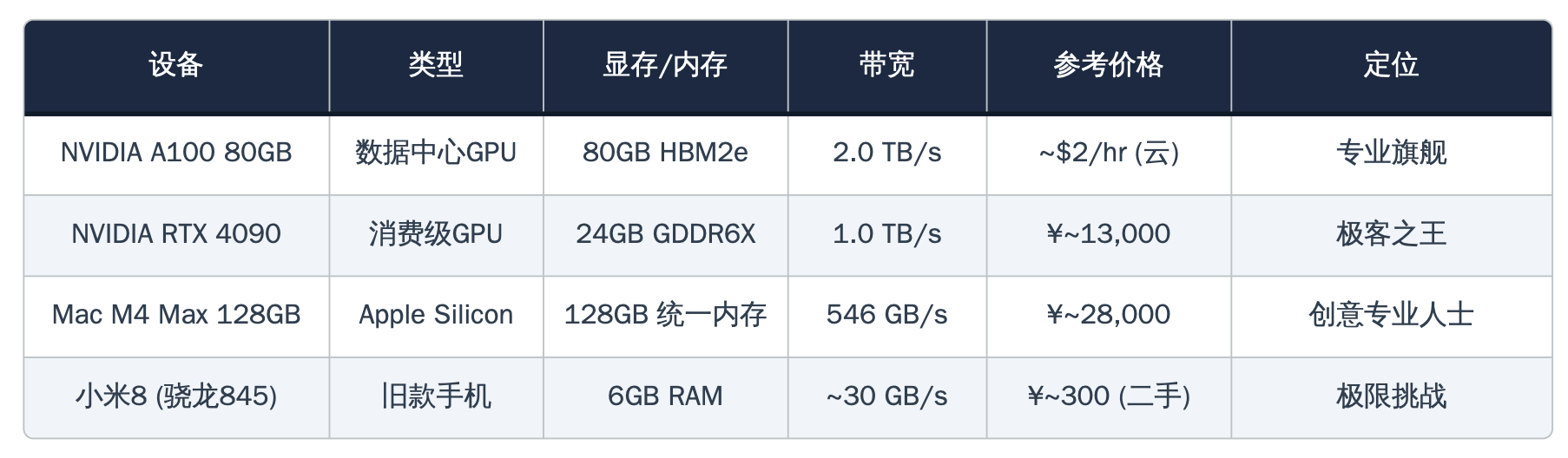

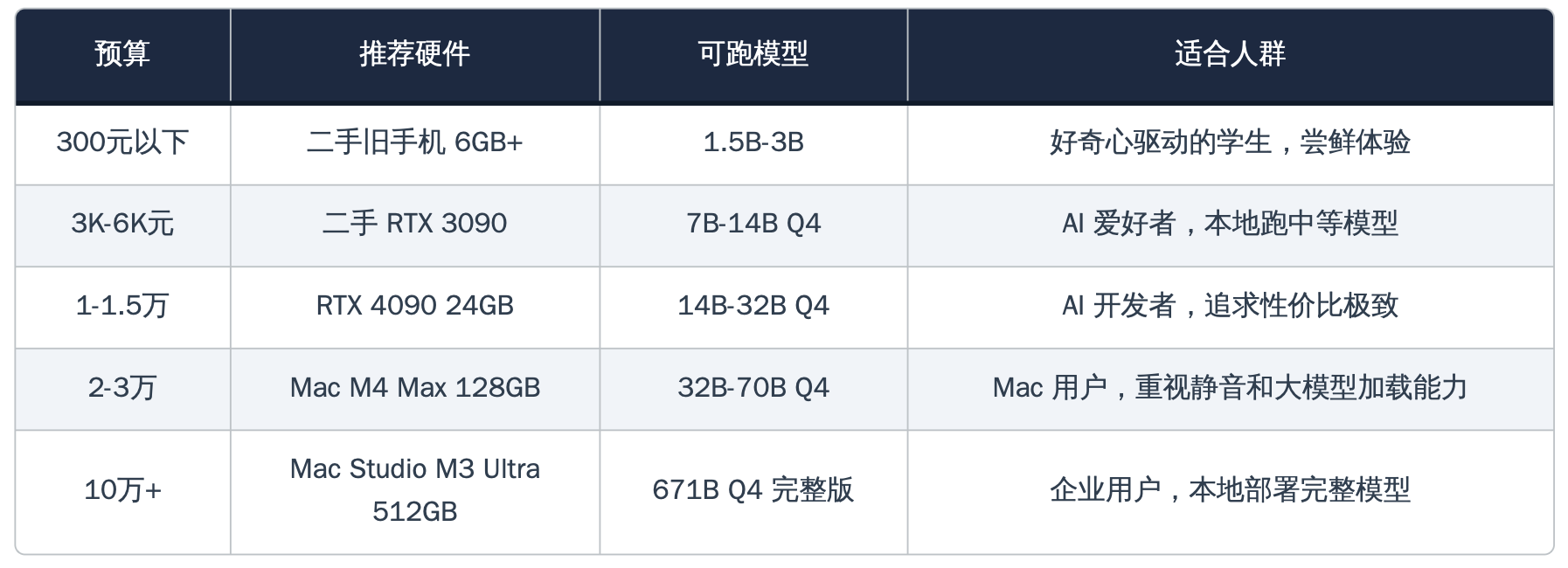

The report, titled "YZ Index · 2026 Q1 Hardware Tier List," puts four devices on the same DeepSeek local-inference battleground: the NVIDIA A100 80GB (datacenter flagship, rentable in the cloud at $2/hour), the NVIDIA RTX 4090 (the king of consumer GPUs, priced around $2,000 in China), an Apple M4 Max Mac with 128GB of memory (priced around $4,200), and a Xiaomi 8 smartphone released in 2018 (just $45 second-hand).

This isn't a routine benchmark. This is a stress test of how close AI really is to ordinary people.

RTX 4090: Cloud-Level Experience for $2,000

The report's core finding belongs to the RTX 4090.

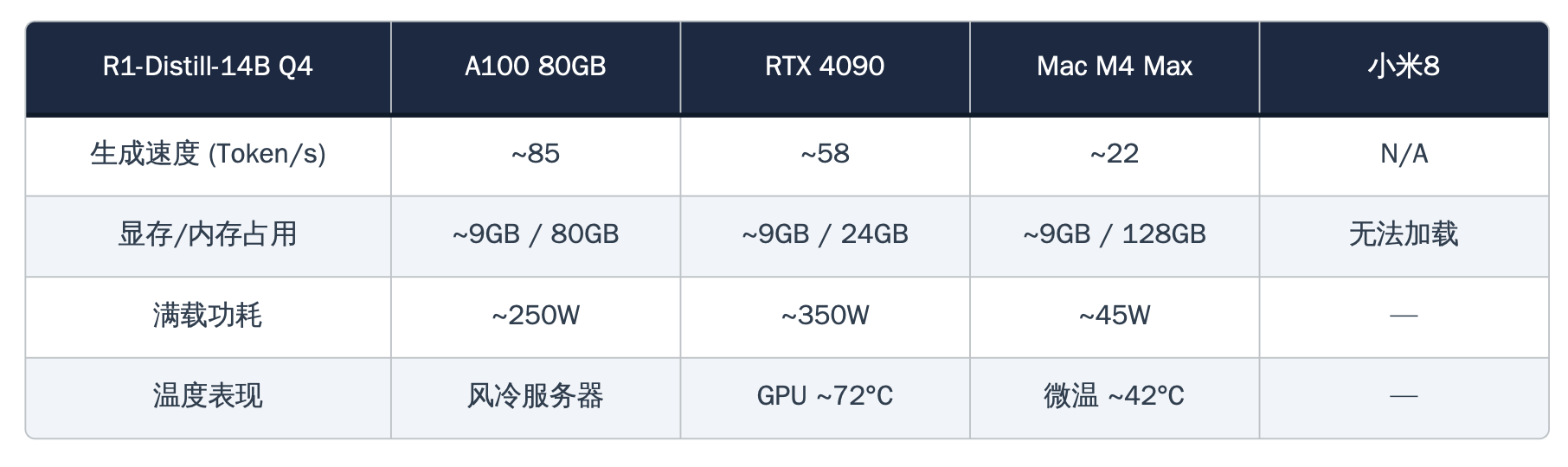

Running the 14B-parameter DeepSeek-R1 distilled model, this consumer GPU reached 58 tokens per second. What does that mean? It is far faster than most people can read. Even with the larger 32B-parameter model, the 4090 holds a steady 34 tokens per second, which the report puts at about 75% of an enterprise H100's performance for roughly one-fifth of the cost.
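To put those rates in reading terms, here is a back-of-the-envelope conversion. It assumes roughly 0.75 English words per token, a common rule of thumb rather than a figure from the report, and can be compared against typical adult reading speeds, which are commonly cited at around 200-300 words per minute. The helper names are hypothetical; the value ratio simply divides the report's 75% performance claim by its one-fifth cost claim.

```python
# Assumption: ~0.75 English words per token (rule of thumb, not from the report).
WORDS_PER_TOKEN = 0.75


def tokens_per_sec_to_wpm(tps: float, words_per_token: float = WORDS_PER_TOKEN) -> float:
    """Convert a generation rate in tokens per second to words per minute."""
    return tps * words_per_token * 60


def relative_value(perf_ratio: float, cost_ratio: float) -> float:
    """Performance per dollar of one device relative to another."""
    return perf_ratio / cost_ratio


# RTX 4090 rates reported for the 14B and 32B distilled models.
for label, tps in [("14B", 58), ("32B", 34)]:
    print(f"RTX 4090, {label}: ~{tokens_per_sec_to_wpm(tps):,.0f} words/min")

# Report's claim: ~75% of an H100's performance at ~1/5 of the cost.
print(f"Performance per dollar vs. H100: ~{relative_value(0.75, 0.2):.2f}x")
```

Under that assumption, 58 tokens per second works out to roughly 2,600 words per minute, several times faster than typical reading speed.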

Winzheng Research Institute awards the RTX 4090 its "Poor Man's Ferrari" title without hesitation, on simple and compelling grounds: for just over $2,000, you can have a home AI workstation with complete data privacy, no ongoing fees, and a cloud-comparable experience.

M4 Max: You Think It's a Laptop, But It's Running 70B Parameters

If the 4090 wins on value, Apple's M4 Max wins on a dimension everyone underestimated—capacity.

Thanks to Apple Silicon's unified memory architecture, the 128GB M4 Max loaded and ran the 70B-parameter DeepSeek-R1 distilled model. A single RTX 4090 physically cannot do this: its 24GB of VRAM cannot even hold the roughly 40GB of quantized model weights, whereas the M4 Max's unified memory lets the CPU and GPU share one 128GB pool with room to spare.
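The capacity argument comes down to simple arithmetic: weight memory is roughly parameter count times bits per weight, divided by 8. The sketch below assumes about 4.5 bits per weight for the quantized case (llama.cpp's Q4_0 format, for example, stores 4.5 bits per weight); the report does not say which quantization it used. It also ignores the KV cache and runtime overhead, so real memory use is somewhat higher.

```python
def weight_gb(params_billion: float, bits_per_weight: float) -> float:
    """Approximate weight size in GB: billions of parameters x bytes per parameter."""
    return params_billion * bits_per_weight / 8


# Memory capacities mentioned in the report (GPU VRAM or unified memory), in GB.
MEMORY_GB = {"RTX 4090": 24, "A100 80GB": 80, "M4 Max": 128}

# 16 bits = unquantized weights; 4.5 bits/weight = an assumed 4-bit-class quantization.
for bits in (16, 4.5):
    size = weight_gb(70, bits)
    fits = [name for name, mem in MEMORY_GB.items() if size < mem]
    where = ", ".join(fits) or "none"
    print(f"70B at {bits} bits/weight: ~{size:.0f} GB of weights; fits in: {where}")
```

At 16-bit precision a 70B model needs about 140GB for weights alone, more than even the 128GB M4 Max offers, which is why a footprint of around 40GB implies quantized weights.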

Power efficiency is even more impressive. Total system power draw is just 40-45 watts, about one-eighth of the 4090 at full load, while tokens per watt reach nearly seven times the 4090's figure. The report calls it the "Silent Geek": it looks like an ordinary MacBook from the outside, but quietly runs a 70-billion-parameter language model inside.
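The two efficiency figures also pin down relative speed. Because efficiency is tokens per second divided by watts, a 7x efficiency advantage at one-eighth of the power implies the M4 Max was generating tokens at about 7/8 of the 4090's rate. This assumes both figures describe the same workload, which the report does not state explicitly; the function name is illustrative.

```python
def implied_throughput_ratio(efficiency_ratio: float, power_ratio: float) -> float:
    """
    efficiency = tokens_per_second / watts, so
        (tps_a / W_a) / (tps_b / W_b) = efficiency_ratio
    which rearranges to
        tps_a / tps_b = efficiency_ratio * (W_a / W_b) = efficiency_ratio * power_ratio
    """
    return efficiency_ratio * power_ratio


# Report figures: the M4 Max draws ~1/8 of the 4090's full-load power
# and reaches ~7x its tokens per watt.
ratio = implied_throughput_ratio(efficiency_ratio=7, power_ratio=1 / 8)
print(f"Implied M4 Max generation speed: ~{ratio:.1%} of the RTX 4090's")
```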

Xiaomi 8: $45 Worth of "Performance Art"

However, the most surprising chapter in the entire report belongs to that Xiaomi 8.

This 2018 phone, with a Snapdragon 845 processor and just 6GB of RAM, loaded the 1.5B-parameter DeepSeek-R1 distilled model using the Termux terminal emulator and the Ollama inference framework, and generated text at 3-5 tokens per second, barely fast enough to keep up with a human reader.
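Ollama exposes a local HTTP API, and its non-streaming `/api/generate` response includes `eval_count` (generated tokens) and `eval_duration` (in nanoseconds), which is one way to measure tokens per second like the figures above. This sketch assumes an Ollama server is already running on the default port 11434 (for example via `ollama serve` inside Termux) and that the model was pulled under the `deepseek-r1:1.5b` tag; the report does not say how it measured speed or which tag it used.

```python
import json
import urllib.error
import urllib.request

# Ollama's default local endpoint; assumes `ollama serve` is already running.
OLLAMA_URL = "http://localhost:11434/api/generate"


def generate(prompt: str, model: str = "deepseek-r1:1.5b") -> dict:
    """Send one non-streaming generation request and return the parsed JSON reply."""
    body = json.dumps({"model": model, "prompt": prompt, "stream": False}).encode()
    req = urllib.request.Request(
        OLLAMA_URL, data=body, headers={"Content-Type": "application/json"}
    )
    # Generous timeout: a throttling phone may take minutes per answer.
    with urllib.request.urlopen(req, timeout=300) as resp:
        return json.load(resp)


def tokens_per_second(result: dict) -> float:
    """Generated tokens divided by generation time (eval_duration is in nanoseconds)."""
    return result["eval_count"] / (result["eval_duration"] / 1e9)


if __name__ == "__main__":
    try:
        reply = generate("Explain what a token is in one sentence.")
        print(f"{tokens_per_second(reply):.1f} tokens/s")
    except (urllib.error.URLError, TimeoutError) as exc:
        print(f"Could not reach Ollama at {OLLAMA_URL}: {exc}")
```

Using `eval_count` and `eval_duration` isolates generation speed from model loading and prompt processing, which Ollama reports separately.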

The cost is obvious. After less than two minutes of continuous inference, the device temperature climbed above 45°C, with local hotspots approaching 50°C. The processor began thermal throttling, and generation speed dropped from 5 tokens per second to 2-3. The report includes a bold warning: "Do not test while charging or under the covers unless you want to literally experience being an 'AI fever enthusiast.'"

Winzheng Research Institute calls this test "performance art." It proves a possibility, namely that a $45 used phone can run AI inference entirely offline, but the result is still far from practical. The small 1.5B-parameter model can handle simple Q&A and code completion, but complex reasoning and long-form text generation are beyond it.

A100: The Unsurprising Ceiling

The NVIDIA A100 80GB, included as the control group, unsurprisingly takes the top spot in raw performance. With 80GB of HBM2e memory and up to 2TB/s of memory bandwidth, it can hold a 32B-parameter model at unquantized 16-bit precision (about 64GB of weights). Running the 70B model across two cards, output speed settles at around 19 tokens per second, with GPU utilization holding near 88%.
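Memory bandwidth matters because single-stream decoding is usually memory-bound: for a dense model, each generated token requires reading every weight once, so bandwidth divided by weight size gives a rough ceiling on tokens per second. The sketch applies that rule of thumb to an assumed example, a 32B model at 16-bit precision on one A100; it is an upper bound that ignores KV-cache traffic and other overheads, not a figure from the report.

```python
def max_decode_tps(weight_gb: float, bandwidth_gb_s: float) -> float:
    """
    Rule-of-thumb ceiling for batch-size-1 decoding of a dense model:
    every new token streams all weights from memory once, so
    tokens/s <= bandwidth / weight size. KV-cache reads, kernel
    overheads and compute limits push real speeds below this bound.
    """
    return bandwidth_gb_s / weight_gb


# Assumed example: 32B parameters at 16 bits (~64 GB of weights)
# on one A100 80GB with ~2,000 GB/s of memory bandwidth.
print(f"Upper bound: ~{max_decode_tps(64, 2000):.0f} tokens/s")
```

With about 64GB of weights and 2,000 GB/s of bandwidth, the ceiling is roughly 31 tokens per second; measured speeds typically land below it.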

The report's verdict is thought-provoking, though: "This is a literal Ferrari: great, but too expensive." A single card costs $10,000-15,000 to buy, and cloud rental runs about $2 per hour, which puts it largely out of reach for individual users.
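A quick way to weigh renting against buying is the break-even point: purchase price divided by the hourly rental rate. The sketch uses the report's $2/hour A100 rate and its price figures; it ignores electricity, resale value, and the performance gap between an RTX 4090 and an A100, so treat it as rough orientation only. The function name is illustrative.

```python
RENTAL_USD_PER_HOUR = 2.0  # A100 cloud rental rate quoted in the report


def breakeven_hours(purchase_usd: float, rate_usd_per_hour: float = RENTAL_USD_PER_HOUR) -> float:
    """Hours of rental that cost as much as buying the hardware outright."""
    return purchase_usd / rate_usd_per_hour


# Purchase prices quoted in the report (A100 given as a $10,000-15,000 range).
for name, price in [("RTX 4090", 2_000), ("A100 (low)", 10_000), ("A100 (high)", 15_000)]:
    print(f"{name}: {breakeven_hours(price):,.0f} rental hours")
```

At $2/hour, the price of an RTX 4090 buys about 1,000 hours of A100 time, while buying an A100 outright only pays off after 5,000-7,500 rental hours.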

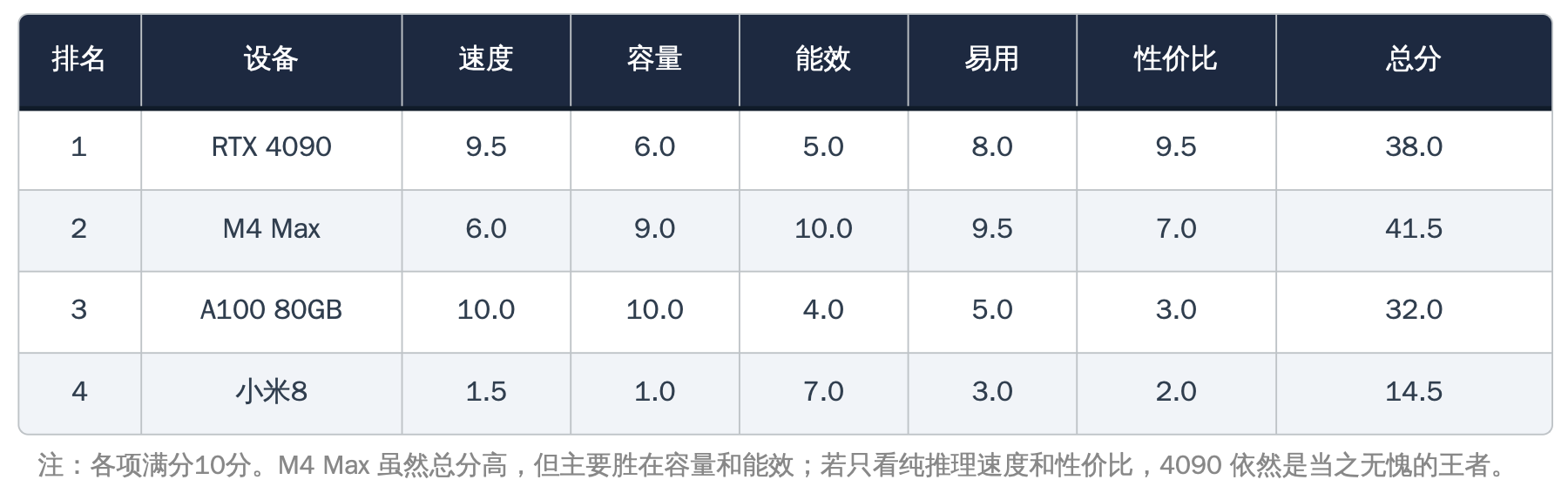

One Chart, Four Worlds

The final "YZ Index Comprehensive Rankings" score each device on five dimensions, each on a 10-point scale (for a maximum of 50 points): speed, capacity, efficiency, usability, and value. The RTX 4090 takes the value-for-money crown with 38 points, but the M4 Max actually posts the highest overall total at 41.5 points, mainly on the strength of capacity and efficiency. The A100 places third with 32 points, and the Xiaomi 8 brings up the rear with 14.5 points, though it receives a specially created "Best Courage Award."
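Because each of the five dimensions is scored out of 10, the totals are out of 50. The snippet below simply re-ranks the four reported totals and expresses each as a share of that maximum; the per-dimension scores are not listed here, so only totals are used.

```python
MAX_SCORE = 5 * 10  # five dimensions, each on a 10-point scale

# Overall totals as reported in the YZ Index rankings.
totals = {"Apple M4 Max": 41.5, "RTX 4090": 38, "A100 80GB": 32, "Xiaomi 8": 14.5}

# Sort by total score, highest first.
ranked = sorted(totals.items(), key=lambda item: item[1], reverse=True)
for rank, (device, score) in enumerate(ranked, start=1):
    print(f"{rank}. {device}: {score}/{MAX_SCORE} ({score / MAX_SCORE:.0%})")
```

This puts the M4 Max at 83% of the maximum and the Xiaomi 8 at 29%.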

The report concludes:

"We are entering an era of AI democratization. Whether your budget is $45 or $45,000, you can find your own Ferrari. The only difference is that some are real F40s while others are scale models—but they all let you feel the speed and passion."

This may be the most powerful testament to AI compute democratization since the beginning of 2026.

Winzheng Research Institute is an independent research organization focused on AI benchmarking, model safety, and hardware optimization, maintaining a fully independent and objective testing stance.

© 2026 Winzheng.com 赢政天下 | When reposting, please credit the source and include a link to the original article.